Integrations

KubeSense supports multiple notification channels for delivering alerts to your team. Configure one or more channels and associate them with alert rules for flexible routing.

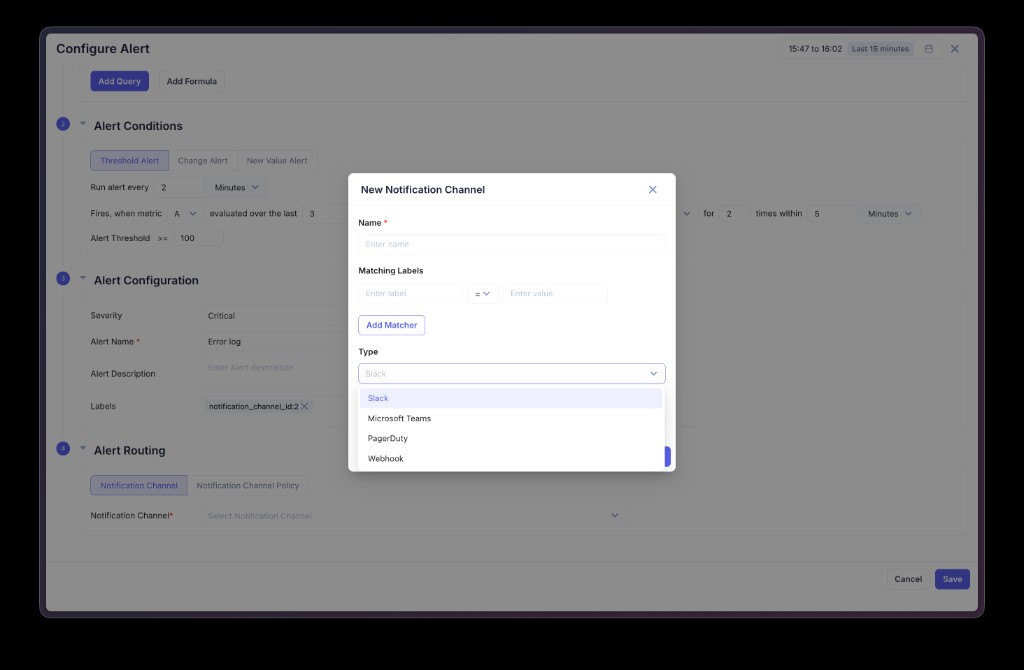

Creating a Notification Channel

Navigate to Alerts in the sidebar and open the notification channel settings. Click to add a new channel.

| Field | Description |

|---|---|

| Name | A unique name for the channel |

| Matching Labels | Optional key-value matchers for label-based routing (e.g., severity = critical) |

| Type | The channel type — Slack, Microsoft Teams, Email, PagerDuty, Datadog On-Call, Google Chat, or Webhook |

After selecting a type, provide the required configuration fields (see below), then save the channel.

Supported Channels

Slack

Send alert notifications to a Slack channel via an incoming webhook.

| Field | Required | Description |

|---|---|---|

| Webhook URL | Yes | Slack incoming webhook URL |

| Channel | No | Override the default channel |

Slack messages include alert name, status (firing/resolved), severity, value, threshold, description, labels, and a link to open the alert in KubeSense. When multiple alerts are batched, the title shows firing and resolved counts (e.g., [FIRING:6, RESOLVED:1] Logs Availability).
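The batched title format can be illustrated with a short sketch. This is not KubeSense's implementation: the function name `batched_title` and the choice to omit a zero count are assumptions made for illustration only.

```python
from collections import Counter


def batched_title(alert_name, statuses):
    """Build a title such as '[FIRING:6, RESOLVED:1] Logs Availability'.

    `statuses` holds one entry per alert in the batch, each either
    'firing' or 'resolved'. Omitting a zero count is an assumption.
    """
    counts = Counter(statuses)
    parts = []
    if counts["firing"]:
        parts.append(f"FIRING:{counts['firing']}")
    if counts["resolved"]:
        parts.append(f"RESOLVED:{counts['resolved']}")
    return f"[{', '.join(parts)}] {alert_name}"


print(batched_title("Logs Availability", ["firing"] * 6 + ["resolved"]))
# prints: [FIRING:6, RESOLVED:1] Logs Availability
```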

Microsoft Teams

Send alert notifications to a Microsoft Teams channel via a webhook connector.

| Field | Required | Description |

|---|---|---|

| Webhook URL | Yes | Teams incoming webhook URL (from a Teams Workflow or Power Automate connector) |

Teams messages use Adaptive Card formatting with the same information as Slack — alert name, status, severity, value, threshold, labels, and timestamps.

Email

Deliver alert notifications via SMTP email to one or more recipients.

| Field | Required | Description |

|---|---|---|

| SMTP Host | Yes | SMTP server hostname (e.g., smtp.gmail.com, smtp.office365.com) |

| SMTP Port | Yes | SMTP port (typically 587 for STARTTLS, 465 for implicit TLS/SSL) |

| Username | Yes | SMTP authentication username |

| Password | Yes | SMTP authentication password or app-specific password |

| From Email | Yes | Sender email address |

| To Emails | Yes | List of recipient email addresses |

| Use TLS | No | Enable TLS encryption (recommended) |
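Before entering SMTP settings into the channel, it can help to confirm them independently. The sketch below uses Python's standard `smtplib` and `email` modules. The helper names (`build_test_email`, `send_via_smtp`) are hypothetical, and the port-based choice between implicit TLS and STARTTLS is an assumption about a typical mail server, not a description of how KubeSense sends mail.

```python
import smtplib
from email.message import EmailMessage


def build_test_email(from_email, to_emails, subject, body):
    """Assemble a plain-text message addressed to every recipient."""
    msg = EmailMessage()
    msg["From"] = from_email
    msg["To"] = ", ".join(to_emails)
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


def send_via_smtp(msg, host, port, username, password, use_tls=True):
    """Send `msg` using the same fields the channel form asks for."""
    if port == 465:
        # Port 465 conventionally expects TLS from the first byte.
        with smtplib.SMTP_SSL(host, port, timeout=10) as server:
            server.login(username, password)
            server.send_message(msg)
    else:
        with smtplib.SMTP(host, port, timeout=10) as server:
            if use_tls:
                server.starttls()  # upgrade the connection before login
            server.login(username, password)
            server.send_message(msg)
```

Calling `send_via_smtp(build_test_email(...), "smtp.gmail.com", 587, user, password)` with real credentials should deliver one message if the host, port, and credentials are valid.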

PagerDuty

Create incidents in PagerDuty when alerts fire, and auto-resolve when alerts recover.

| Field | Required | Description |

|---|---|---|

| Integration Key | Yes | PagerDuty service integration key (Events API v2) |

Alert severity is mapped to PagerDuty severity levels. Resolved alerts automatically resolve the corresponding PagerDuty incident.
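For orientation, the sketch below builds the kind of event body that the PagerDuty Events API v2 accepts (`routing_key`, `event_action`, `dedup_key`, and a `payload` with `summary`, `source`, and `severity`). The severity mapping, the `source` value, and the use of a caller-supplied `dedup_key` are assumptions for illustration; the exact mapping KubeSense applies is not documented here.

```python
# Hypothetical mapping from KubeSense severities to the values PagerDuty
# accepts (critical, error, warning, info).
SEVERITY_MAP = {"critical": "critical", "warning": "warning", "info": "info"}


def pagerduty_event(integration_key, alert_name, status, severity, dedup_key):
    """Return an Events API v2 body for a firing or resolved alert."""
    action = "resolve" if status == "resolved" else "trigger"
    event = {
        "routing_key": integration_key,  # the channel's Integration Key
        "event_action": action,
        "dedup_key": dedup_key,  # ties the resolve to the earlier trigger
    }
    if action == "trigger":
        event["payload"] = {
            "summary": alert_name,
            "source": "kubesense",  # assumed source name
            "severity": SEVERITY_MAP.get(severity, "warning"),
        }
    return event


print(pagerduty_event("<integration-key>", "Logs Availability", "firing", "critical", "logs-availability"))
```

Reusing the same `dedup_key` for the trigger and the resolve is what lets a resolved alert close the incident it opened.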

Datadog On-Call

Page a Datadog On-Call team directly from KubeSense alerts. The channel uses the Datadog On-Call paging API, so no native integration is required on the Datadog side, and it works with any region (US, EU, US3, US5, AP1) or a private Datadog deployment.

Prerequisites

Set up the following in Datadog before creating the KubeSense channel:

- API Key: Navigate to Organization Settings → API Keys. Create a new key or copy an existing one. This is the DD-API-KEY.

- Application Key with on_call_page scope: Navigate to Organization Settings → Application Keys. Create a new key and make sure the on_call_page permission is granted. This is the DD-APPLICATION-KEY. Without this scope the paging API returns 403 Forbidden.

- On-Call Team: Navigate to On-Call → Teams and click Set Up Team. Configure:

  - Team members

  - Escalation policy

  - Schedules

  - Notification methods (SMS, push, email) for each team member

- Team Handle: Note the team handle from the team page (e.g. product-autopay). This is the identifier used to page the team.

Channel Configuration

| Field | Required | Description |

|---|---|---|

| API Key | Yes | Datadog API key (DD-API-KEY) |

| Application Key | Yes | Datadog Application key with on_call_page scope (DD-APPLICATION-KEY) |

| Team Handle | Yes | Datadog On-Call team handle (e.g. product-autopay) |

| API URL | No | Datadog On-Call base URL for your region. Leave blank for the US default. Examples below. |

| Default Urgency | No | high triggers immediate paging (default). low sends a non-critical notification. |

Region API URLs:

| Region | API URL |

|---|---|

| US (default) | https://navy.oncall.datadoghq.com |

| EU | https://navy.oncall.datadoghq.eu |

| US3 | https://navy.oncall.us3.datadoghq.com |

| US5 | https://navy.oncall.us5.datadoghq.com |

| AP1 | https://navy.oncall.ap1.datadoghq.com |

For private or proxied Datadog deployments, paste the base URL pointing to your tenant.
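The channel fields and the region table can be summarized in a small sketch. The dictionary keys (`api_key`, `application_key`, `team_handle`, `default_urgency`) and both function names are hypothetical; only the URLs and the blank-means-US rule come from the tables above.

```python
REGION_API_URLS = {
    "US": "https://navy.oncall.datadoghq.com",
    "EU": "https://navy.oncall.datadoghq.eu",
    "US3": "https://navy.oncall.us3.datadoghq.com",
    "US5": "https://navy.oncall.us5.datadoghq.com",
    "AP1": "https://navy.oncall.ap1.datadoghq.com",
}


def resolve_api_url(api_url=""):
    """Return the configured base URL, or the US default when blank."""
    custom = (api_url or "").strip().rstrip("/")
    return custom or REGION_API_URLS["US"]


def config_problems(config):
    """List missing required fields and invalid urgency values."""
    issues = []
    for field in ("api_key", "application_key", "team_handle"):
        if not str(config.get(field, "")).strip():
            issues.append(f"{field} is required")
    if config.get("default_urgency", "high") not in ("high", "low"):
        issues.append("default_urgency must be high or low")
    return issues


print(resolve_api_url())                     # US default
print(resolve_api_url(REGION_API_URLS["EU"]))
```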

Per-Alert Urgency Override

To override the channel's default urgency on a per-rule basis, add an urgency label to the alert rule with value high or low. The first alert in a group with this label sets the urgency for the entire page.
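The first-match rule described above can be sketched as follows. The alert structure (a dict with a `labels` mapping) and the decision to skip values other than high or low are assumptions for illustration.

```python
def page_urgency(alerts, default_urgency="high"):
    """Pick the urgency for a page covering a group of alerts.

    The first alert that carries a valid `urgency` label wins;
    otherwise the channel's default urgency applies.
    """
    for alert in alerts:
        value = alert.get("labels", {}).get("urgency")
        if value in ("high", "low"):
            return value
    return default_urgency


group = [
    {"labels": {"severity": "warning"}},
    {"labels": {"urgency": "low"}},
    {"labels": {"urgency": "high"}},
]
print(page_urgency(group))  # prints: low
```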

Notification Content

Each page sent to Datadog includes:

- Title: <alertname> [FIRING:N, RESOLVED:M]

- Description: Multi-line summary with status, severity, summary, description, Alertmanager URL, and per-alert details (name, value, threshold, labels, start time). Truncated after the first 5 alerts.

- Tags: source:kubesense, severity:<level>, alertname:<name>, and env:<value> when present.

- Target: The team handle configured on the channel.

Pages appear in Datadog → On-Call → Pages and follow the team's escalation policy.
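As a rough illustration of the tag set and the five-alert truncation, consider the sketch below. The function names, the per-alert line layout, and the trailing "and N more" line are assumptions; the source only states which tags are sent and that details stop after the first 5 alerts.

```python
MAX_ALERTS_IN_DESCRIPTION = 5


def page_tags(severity, alert_name, env=None):
    """Build the tag list; the env tag is added only when present."""
    tags = ["source:kubesense", f"severity:{severity}", f"alertname:{alert_name}"]
    if env:
        tags.append(f"env:{env}")
    return tags


def per_alert_lines(alerts):
    """Render per-alert details, keeping only the first five alerts."""
    shown = alerts[:MAX_ALERTS_IN_DESCRIPTION]
    lines = [
        f"- {a['name']}: value={a['value']} threshold={a['threshold']}"
        for a in shown
    ]
    hidden = len(alerts) - len(shown)
    if hidden > 0:
        lines.append(f"... and {hidden} more")  # assumed truncation marker
    return lines


print(page_tags("critical", "Logs Availability", env="prod"))
```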

Common Errors

| Error | Cause | Fix |

|---|---|---|

| 403 Forbidden: Failed permission authorization checks | Application Key missing on_call_page scope | Edit the App Key in Datadog and add the on_call_page permission |

| 404 Not Found on the page endpoint | Wrong API URL for your region | Verify the API URL matches your Datadog tenant region |

| Page accepted but no user notified | Team handle wrong, schedule inactive, or users have no notification methods | Verify team handle, on-call schedule, and that each team member has SMS/push/email configured |

Google Chat

Send alert notifications to a Google Chat space via an incoming webhook.

| Field | Required | Description |

|---|---|---|

| Webhook URL | Yes | Google Chat space webhook URL (must start with https://chat.googleapis.com/) |

Google Chat messages use Cards v2 format with alert name, status, severity, description, value, threshold, and labels.
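The URL requirement in the table can be checked before saving the channel. This is a minimal sketch; the function name is hypothetical, and the path in the example is a made-up placeholder rather than a real webhook.

```python
REQUIRED_PREFIX = "https://chat.googleapis.com/"


def is_valid_google_chat_webhook(url):
    """Return True when the URL starts with the required prefix."""
    return url.strip().startswith(REQUIRED_PREFIX)


print(is_valid_google_chat_webhook("https://chat.googleapis.com/v1/spaces/EXAMPLE/messages"))
print(is_valid_google_chat_webhook("http://chat.googleapis.com/v1/spaces/EXAMPLE/messages"))
```

Because the prefix includes the trailing slash, a look-alike host such as chat.googleapis.com.example.org does not pass the check.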

Webhook

Send alert payloads as HTTP requests to any endpoint — useful for custom integrations, ChatOps bots, ticketing systems, or internal tooling.

| Field | Required | Description |

|---|---|---|

| URL | Yes | Target HTTP endpoint |

| Method | No | HTTP method (defaults to POST) |

| Headers | No | Custom HTTP headers (e.g., Authorization: Bearer <token>) |

| Timeout | No | Request timeout in seconds |

The payload contains the full alert context including name, status, severity, value, threshold, labels, and annotations.
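To exercise a receiving endpoint before pointing KubeSense at it, a request with the same settings can be assembled with Python's standard library. The payload keys below are illustrative assumptions based on the fields listed above, not the exact schema KubeSense sends, and `build_webhook_request` is a hypothetical helper.

```python
import json
import urllib.request


def build_webhook_request(url, payload, method="POST", headers=None):
    """Create a JSON request that mirrors the channel's URL, Method, and Headers."""
    body = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(url, data=body, method=method)
    request.add_header("Content-Type", "application/json")
    for name, value in (headers or {}).items():
        request.add_header(name, value)
    return request


# Assumed payload shape, for illustration only.
sample_payload = {
    "name": "Logs Availability",
    "status": "firing",
    "severity": "critical",
    "value": 120,
    "threshold": "greater_than 100",
    "labels": {"team": "platform"},
    "annotations": {},
}

request = build_webhook_request(
    "https://example.com/hooks/alerts",
    sample_payload,
    headers={"Authorization": "Bearer <token>"},
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen(request, timeout=10)` sends it, with the `timeout` argument playing the role of the channel's Timeout field.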

Alert Routing

There are two ways to route alerts to notification channels:

Direct Assignment

When creating an alert rule, select a specific notification channel from the Notification Channel dropdown in the Alert Routing section. All alerts from that rule are sent to the selected channel.

Label-Based Routing (Notification Channel Policy)

Configure Matching Labels on a notification channel to enable automatic routing. When an alert fires, its labels are compared against the matching labels of all channels. If a channel's matchers match the alert's labels, that channel receives the notification.

This enables patterns like:

- Channel with severity = critical receives all critical alerts

- Channel with team = platform receives all alerts labeled for the platform team

- Channel with no matchers acts as a catch-all for unmatched alerts

Multiple channels can match a single alert, allowing you to send the same alert to both Slack and PagerDuty simultaneously.
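The routing behavior can be sketched as a small matching function. It assumes that every matcher on a channel must equal the corresponding alert label (AND semantics, exact equality) and that channels without matchers receive only alerts no other channel matched. Both points are interpretations of the description above, and the channel names are made up.

```python
def matches(matchers, labels):
    """True when every matcher key/value pair is present in the labels."""
    return all(labels.get(key) == value for key, value in matchers.items())


def route(alert_labels, channels):
    """Return the names of the channels that should receive the alert."""
    matched = [
        c["name"]
        for c in channels
        if c["matchers"] and matches(c["matchers"], alert_labels)
    ]
    if matched:
        return matched
    # No labelled channel matched: fall back to catch-all channels.
    return [c["name"] for c in channels if not c["matchers"]]


channels = [
    {"name": "pagerduty-critical", "matchers": {"severity": "critical"}},
    {"name": "slack-platform", "matchers": {"team": "platform"}},
    {"name": "email-default", "matchers": {}},
]

print(route({"severity": "critical", "team": "platform"}, channels))
# prints: ['pagerduty-critical', 'slack-platform']
print(route({"severity": "info", "team": "billing"}, channels))
# prints: ['email-default']
```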

Testing Channels

After creating a notification channel, use the Test action to send a test notification and verify connectivity before associating it with alert rules.

Notification Content

All notification channels receive the same core alert information:

| Field | Description |

|---|---|

| Alert Name | Name of the alert rule |

| Status | firing or resolved |

| Severity | critical, warning, or info |

| Value | The evaluated metric value that triggered the alert |

| Threshold | The configured threshold and operator (e.g., greater_than 100) |

| Description | Alert description text |

| Labels | All labels attached to the alert (including group-by values for multi-series alerts) |

| Started | Timestamp when the alert started firing |

| Link | Direct link to view the alert in KubeSense |

For batched notifications (multiple alerts grouped together), the title includes firing and resolved counts — for example, [FIRING:6, RESOLVED:1] Logs Availability.